Introduction: The Central Nervous System for Autonomous AI

ClawHub has officially become the central nervous system for autonomous AI agents, bridging the gap between raw LLM intelligence and real-world execution. If OpenClaw is the brain—capable of understanding natural language, reasoning through complex problems, and generating human-like responses—then ClawHub is the tool belt containing everything from social media API keys to cloud server wrenches. Without this marketplace, your AI agent remains trapped in a purely conversational sandbox, unable to send emails, scrape websites, or automate workflows across your digital infrastructure.

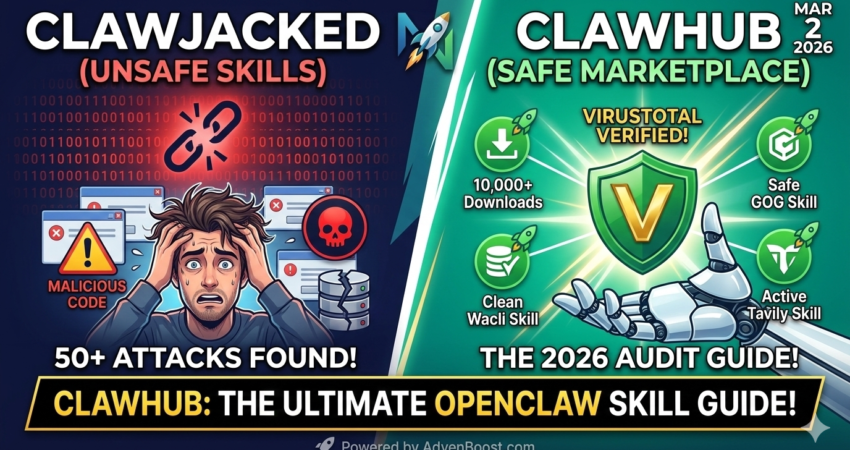

The ecosystem reached a watershed moment on March 2, 2026, when the registry officially surpassed 13,000 publicly available skills. However, this explosive growth came with a dark side. Last month’s discovery of the “ClawHavoc” malware campaign—which exposed 341 malicious skills designed to exfiltrate environment variables and inject backdoor prompts—has fundamentally altered the security landscape. Consequently, modern ClawHub users must balance velocity with vigilance, treating every skill installation as a potential attack vector rather than a simple npm package.

This technical authority guide will equip infrastructure architects and security auditors with the knowledge required to navigate ClawHub safely in 2026. We’ll dissect the anatomy of modern skills, master the CLI toolchain, analyze the VirusTotal Code Insight integration powered by Google’s Gemini model (learn more about Gemini’s capabilities on Google AI), and establish a defense-in-depth strategy that protects your AI agents without sacrificing functionality. As our AI Search Overviews: 2026 Survival Strategy guide argues, understanding how to leverage marketplace ecosystems like ClawHub has become essential for maintaining competitive advantage in the shifting AI landscape.

The Anatomy of a 2026 Skill: Understanding the SKILL.md Architecture

Modern ClawHub skills are built around a standardized SKILL.md file format that serves as both documentation and executable configuration. Unlike traditional package managers where code and metadata live in separate files, OpenClaw skills embed everything within a single Markdown document using YAML frontmatter and structured sections.

At the top of every SKILL.md file, you’ll find frontmatter variables enclosed in triple dashes. These variables are not merely decorative metadata—they control critical runtime behaviors. The disable-model-invocation flag, for instance, prevents the skill from making unauthorized API calls to external LLMs, which became a mandatory security control after the ClawHavoc incident. Skills that lack this flag or set it to false should trigger immediate red flags during your audit process.
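As a rough illustration of the audit step described above, the sketch below reads the frontmatter of a SKILL.md file and reports whether disable-model-invocation is explicitly enabled. It assumes flat `key: value` frontmatter lines and treats a missing flag as a failure. The sample file and both function names are hypothetical, and a real audit would use a full YAML parser.

```python
def parse_frontmatter(skill_md: str) -> dict:
    """Parse flat `key: value` frontmatter between the first two '---' lines."""
    lines = skill_md.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}
    fields = {}
    for line in lines[1:]:
        if line.strip() == "---":
            return fields
        if ":" in line and not line.lstrip().startswith("#"):
            key, _, value = line.partition(":")
            fields[key.strip()] = value.strip().strip("\"'")
    return {}  # no closing delimiter: treat as missing frontmatter


def model_invocation_disabled(skill_md: str) -> bool:
    """True only when disable-model-invocation is explicitly set to true."""
    value = parse_frontmatter(skill_md).get("disable-model-invocation", "")
    return value.lower() == "true"


# Hypothetical sample file used only for illustration.
EXAMPLE = """---
name: docx-to-pdf
disable-model-invocation: true
---
Description
Converts DOCX files to PDF.
"""

if __name__ == "__main__":
    print(model_invocation_disabled(EXAMPLE))
```

Failing closed (missing or malformed frontmatter counts as "not disabled") matches the guidance that skills lacking the flag deserve the same scrutiny as skills that set it to false.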

Furthermore, the frontmatter now includes a signature field containing the SHA-256 hash of the skill’s core code sections. Every time you run clawhub update, the CLI verifies this hash against the registry’s canonical version. Should the hashes diverge, the update fails and logs a potential tampering event. This cryptographic verification system was introduced in OpenClaw v2026.2.25 specifically to address the “ClawJacked” vulnerability, where attackers performed man-in-the-middle attacks to inject malicious code during skill downloads.
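The hash comparison itself can be sketched in a few lines. The article does not specify which bytes count as the "core code sections", so this example assumes the hash covers everything after the closing frontmatter delimiter. That choice, the LF line endings, and the function names are all illustrative assumptions rather than the CLI's actual behavior.

```python
import hashlib
import hmac


def body_after_frontmatter(skill_md: str) -> str:
    """Return the text after the closing '---' line of the frontmatter."""
    parts = skill_md.split("---\n", 2)
    # parts == ["", frontmatter, body] when the file starts with '---\n'
    if len(parts) == 3 and parts[0] == "":
        return parts[2]
    return skill_md


def body_digest(skill_md: str) -> str:
    """SHA-256 hex digest of the assumed 'core' portion of the skill."""
    body = body_after_frontmatter(skill_md)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def signature_matches(skill_md: str, expected_hex: str) -> bool:
    """Compare the computed digest with the registry's value in constant time."""
    computed = body_digest(skill_md).encode("ascii")
    expected = expected_hex.strip().lower().encode("utf-8")
    return hmac.compare_digest(computed, expected)
```

Whatever the exact byte range, the important property is that the expected digest comes from the registry rather than from the downloaded file, since a tampered file could carry a matching tampered hash.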

Below the frontmatter, skills are organized into semantic sections: Description, Triggers, Tools, Examples, and Error Handling. The Triggers section is particularly important from a security perspective because it defines the natural language patterns that activate the skill. Overly broad triggers like “do anything with files” create attack surface for prompt injection, whereas specific triggers like “convert DOCX to PDF using pypdf” limit the blast radius of a compromised skill. Understanding these security fundamentals is as critical as understanding npm package security best practices.

Mastering the ClawHub CLI: A Technical Deep Dive

The ClawHub command-line interface serves as your primary interaction point with the global registry. Installation requires Node.js 16 or higher and begins with a single command: npm i -g clawhub. This places the clawhub binary in your global npm bin directory, typically /usr/local/bin on Unix systems or %APPDATA%\npm on Windows.

Once installed, the CLI exposes several critical commands that every infrastructure architect must understand. The clawhub search command queries the registry using keyword filters and sort parameters. For example, clawhub search --sort downloads returns skills ranked by popularity, which serves as a crude reputation metric. Skills with fewer than 100 downloads should be subjected to enhanced scrutiny, as attackers often publish malware under names similar to popular packages—a technique known as typosquatting.
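To make the typosquatting risk concrete, here is a minimal sketch that flags a candidate slug when it closely resembles, but does not exactly match, a well-known one. It uses string similarity from Python's standard difflib module. The 0.85 threshold and the list of popular slugs are assumptions chosen for illustration, not registry data.

```python
from difflib import SequenceMatcher

# Illustrative list only; a real check would pull top slugs from the registry.
POPULAR = ["github-orchestrator", "tavily", "zapier-bridge", "wacli"]


def similarity(a: str, b: str) -> float:
    """Similarity ratio in [0, 1] between two slugs, ignoring case."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def possible_typosquats(candidate: str, popular_slugs, threshold: float = 0.85):
    """Return popular slugs the candidate resembles without matching exactly."""
    return [
        slug
        for slug in popular_slugs
        if slug.lower() != candidate.lower()
        and similarity(candidate, slug) >= threshold
    ]


if __name__ == "__main__":
    print(possible_typosquats("github-orchestrater", POPULAR))
```

A hit here is a prompt for manual review rather than proof of malice, since legitimate forks and variants often carry similar names.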

When you’ve identified a skill for installation, clawhub install <slug> downloads the SKILL.md file and its dependencies to your local OpenClaw skills directory. Behind the scenes, this command performs several security checks. First, it validates the SHA-256 hash embedded in the skill’s frontmatter. Second, it queries VirusTotal’s Code Insight API to retrieve a behavioral analysis verdict. Third, it checks for known malicious domains or IP addresses in any URL references within the skill. Only after passing all three gates does the installation proceed.
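The three-gate flow described above can be modelled as an ordered list of checks that stops at the first failure. The sketch below implements only the third gate concretely, by extracting hosts from URLs in the skill text and comparing them with a blocklist. The hash and verdict gates are stubbed, and every name here (run_gates, BLOCKLIST, the gate labels) is hypothetical rather than part of the real CLI.

```python
import re
from urllib.parse import urlparse

URL_PATTERN = re.compile(r"https?://[^\s)\"'<>]+")

# Placeholder blocklist; real feeds would come from threat intelligence.
BLOCKLIST = {"evil.example"}


def referenced_hosts(skill_md: str) -> set:
    """Collect the lowercase hostnames of all http(s) URLs in the text."""
    hosts = set()
    for url in URL_PATTERN.findall(skill_md):
        host = urlparse(url).hostname
        if host:
            hosts.add(host.lower())
    return hosts


def run_gates(skill_md: str, gates):
    """Run (name, check) pairs in order; stop at the first failing gate."""
    for name, check in gates:
        if not check(skill_md):
            return False, name
    return True, None


GATES = [
    ("hash", lambda text: True),     # stub: signature verification
    ("verdict", lambda text: True),  # stub: behavioral analysis verdict
    ("domains", lambda text: not (referenced_hosts(text) & BLOCKLIST)),
]
```

Returning the name of the failing gate alongside the boolean makes it easy to log why an installation was refused.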

Version control becomes critical when managing production AI agents. The --version flag allows you to pin specific skill versions: clawhub install github-orchestrator --version 2.1.3. This prevents automatic updates from introducing breaking changes or malicious code. In light of the ClawHavoc campaign, many enterprises have adopted a policy of freezing skill versions and only updating after completing manual security audits. The clawhub rollback <slug> command provides a safety net, allowing you to revert to the previous version if an update causes unexpected behavior.

Advanced users should familiarize themselves with the clawhub audit command, introduced in the February 2026 security patch. Running clawhub audit --local scans all installed skills against the latest threat intelligence feeds from VirusTotal and GitHub’s Advisory Database. The output includes a severity score (Low, Medium, High, Critical) and remediation guidance. Any skill flagged as “Critical” should be immediately uninstalled and reported to the ClawHub security team.

Command Logic: clawhub install vs. clawhub search

Understanding the distinction between clawhub install <slug> and clawhub search --sort downloads is fundamental for efficient marketplace navigation. The search command performs read-only queries against the registry’s metadata index without downloading any files or modifying your local system. It returns a JSON array containing skill names, descriptions, download counts, author information, and security verdicts.

In contrast, the install command initiates a write operation that modifies your OpenClaw skills directory. Installation triggers the complete security validation pipeline described earlier, including hash verification, VirusTotal scanning, and dependency resolution. Notably, if you attempt to install a skill that already exists locally, the CLI will prompt you to either overwrite the existing version or cancel the operation. After installation, you can customize these skills further using techniques outlined in our OpenClaw Agents: The Add-Command Blueprint guide.

Version Control: Using the --version Flag

Rolling back skills after a failed update requires understanding the version control semantics built into the ClawHub CLI. When you run clawhub install github-orchestrator --version 2.1.3, the CLI queries the registry’s historical version index rather than fetching the latest release. This historical index is immutable—once a version is published, it cannot be altered or deleted, which provides auditability and reproducibility.

Each skill version is tagged with a semantic version number (major.minor.patch) and a Git commit hash that references the exact state of the source repository at publication time. This dual-versioning system allows you to trace any skill back to its original source code. For organizations maintaining private ClawHub registries, this integration with Git workflows makes it possible to implement GitOps-style deployment pipelines where skill updates flow through the same code review and CI/CD processes as application code.
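Because major.minor.patch versions must be compared numerically rather than as strings (2.10.0 is newer than 2.9.0), a small sketch of version parsing and rollback-target selection may help. It assumes plain three-part versions with no pre-release suffixes. The function names and the version history list are illustrative, not taken from the CLI.

```python
from typing import NamedTuple, Optional


class Version(NamedTuple):
    major: int
    minor: int
    patch: int


def parse_version(text: str) -> Version:
    """Parse 'major.minor.patch' (optionally prefixed with 'v')."""
    parts = text.strip().lstrip("v").split(".")
    if len(parts) != 3 or not all(p.isascii() and p.isdigit() for p in parts):
        raise ValueError(f"not a major.minor.patch version: {text!r}")
    return Version(*(int(p) for p in parts))


def previous_version(installed: str, history) -> Optional[Version]:
    """Pick the newest published version older than the installed one."""
    current = parse_version(installed)
    older = [v for v in map(parse_version, history) if v < current]
    return max(older) if older else None


if __name__ == "__main__":
    print(previous_version("2.1.3", ["2.0.0", "2.1.2", "2.1.3", "2.10.0"]))
```

Tuples compare element by element, so the NamedTuple gives correct ordering without any custom comparison code.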

The “ClawHavoc” Crisis: Security Best Practices for 2026

The ClawHavoc malware campaign represented the first large-scale supply chain attack against the OpenClaw ecosystem. Attackers exploited the open-submission model of ClawHub by publishing skills with legitimate-sounding names like “gmail-enhanced” and “slack-pro-connector.” These malicious skills contained obfuscated JavaScript that exfiltrated AWS credentials, database connection strings, and API tokens from the host system’s environment variables.

VirusTotal Integration: Interpreting Security Verdicts

VirusTotal integration now stands as the first line of defense against such threats. Every skill page on the ClawHub marketplace displays a security verdict: “Benign,” “Suspicious,” or “Malicious.” These verdicts are generated by VirusTotal’s Code Insight engine, powered by Google’s Gemini LLM. Unlike traditional signature-based antivirus scanning (see VirusTotal’s API documentation), Code Insight analyzes the intent of a skill by understanding its natural language descriptions, code patterns, and API interactions.

A skill might contain perfectly valid Python code but receive a “Suspicious” verdict because its description promises “email automation” while its code makes network requests to unfamiliar domains. The verdict system operates on a weighted scoring model that considers multiple factors: static code analysis results from 70+ antivirus engines, behavioral indicators from sandbox execution, reputation scores based on author history, and semantic analysis of the skill’s documentation.

Interpreting these verdicts requires nuance. A “Benign” verdict with 0/70 detections provides strong confidence, but it’s not absolute proof of safety. Zero-day exploits and novel attack techniques may evade detection until signature databases update. Conversely, a “Suspicious” verdict with 2/70 detections might be a false positive caused by legitimate but unusual coding patterns—such as dynamically constructed shell commands or encrypted configuration files.
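To show how a weighted model can turn several signals into one of the three verdicts, here is a toy calculation. The weights, thresholds, and signal names below are invented for illustration; the actual scoring used by Code Insight is not described in this guide. Each signal is normalized to the range 0 (no concern) to 1 (maximum concern).

```python
# Hypothetical weights; they sum to 1.0 so the score stays in [0, 1].
WEIGHTS = {
    "engine_detections": 0.40,  # fraction of antivirus engines flagging the skill
    "sandbox_behavior": 0.30,   # behavioral indicators from sandbox execution
    "author_reputation": 0.15,  # 0 = long clean history, 1 = unknown or bad
    "doc_mismatch": 0.15,       # description versus observed behavior
}


def risk_score(signals: dict) -> float:
    """Weighted sum of signals, each clamped to [0, 1]."""
    return sum(
        weight * min(max(signals.get(name, 0.0), 0.0), 1.0)
        for name, weight in WEIGHTS.items()
    )


def verdict(score: float, suspicious_at: float = 0.2, malicious_at: float = 0.6) -> str:
    """Map a score to a verdict using assumed thresholds."""
    if score >= malicious_at:
        return "Malicious"
    if score >= suspicious_at:
        return "Suspicious"
    return "Benign"


if __name__ == "__main__":
    sample = {
        "engine_detections": 2 / 70,
        "sandbox_behavior": 0.5,
        "author_reputation": 0.2,
        "doc_mismatch": 1.0,
    }
    print(verdict(risk_score(sample)))
```

With these assumed weights, two detections out of 70 contribute very little on their own. The sample lands in the "Suspicious" band mainly because of the mismatch between documentation and behavior, which mirrors the email automation example above.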

The “Local-First” Audit: A Security Checklist

However, automated scanning cannot catch every threat. Prompt injection attacks—where malicious instructions are hidden in the skill’s example prompts or trigger patterns—often evade static analysis. Consequently, the “Local-First” audit checklist has become mandatory for security-conscious organizations:

- Read the SKILL.md file manually before installation. Pay special attention to external URLs, base64-encoded strings, and conditional logic that might alter behavior based on environment variables. Look for suspicious calls like eval(), exec(), or dynamic module loading via __import__().

- Verify the author’s identity by cross-referencing their GitHub profile or organizational domain. Skills published by anonymous accounts should be treated as untrusted. Check whether the author has a verifiable track record of contributions to reputable open-source projects.

- Check the dependency tree. Many skills import Python libraries or npm packages. Run npm audit or pip-audit on these dependencies separately. A skill might be perfectly safe, but if it depends on a compromised package, your entire agent becomes vulnerable.

- Test in an isolated sandbox before deploying to production. Use Docker containers with restricted network access and no real credentials. Tools like Sandstorm or Firejail provide lightweight sandboxing for Linux environments.

- Monitor runtime behavior using process-level auditing tools like auditd (Linux) or Sysmon (Windows). Unusual network connections or file system access patterns indicate compromise. Establish baseline metrics during testing and alert on anomalies.
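The first item on the checklist, the manual read-through, can be supported by a simple pattern scan that points a reviewer at lines worth a closer look. This is a sketch only. The pattern list is an assumption and is far from exhaustive, and a clean result says nothing about prompt injection hidden in ordinary prose.

```python
import re

# Assumed patterns for illustration; tune them for your own review process.
SUSPICIOUS_PATTERNS = {
    "eval call": re.compile(r"\beval\s*\("),
    "exec call": re.compile(r"\bexec\s*\("),
    "dynamic import": re.compile(r"__import__\s*\("),
    "long base64-like string": re.compile(r"[A-Za-z0-9+/]{40,}={0,2}"),
    "environment access": re.compile(r"os\.environ|process\.env"),
    "external URL": re.compile(r"https?://"),
}


def scan_skill(skill_md: str):
    """Return (line_number, label) pairs for lines matching any pattern."""
    findings = []
    for lineno, line in enumerate(skill_md.splitlines(), start=1):
        for label, pattern in SUSPICIOUS_PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, label))
    return findings


if __name__ == "__main__":
    sample = "Description\nresult = eval(payload)\nmod = __import__('os')\n"
    for lineno, label in scan_skill(sample):
        print(f"line {lineno}: {label}")
```

The scan reports line numbers rather than a pass or fail result, because the goal is to direct human attention, not to replace the manual read.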

Gateway Hardening: Securing the ClawHub Marketplace Interface

Gateway hardening has emerged as a critical configuration concern. By default, the ClawHub marketplace runs a local web server on 0.0.0.0:8080 to preview skills before installation. Binding to all interfaces (0.0.0.0) without authentication exposes your skill library to the entire network. An attacker who achieves lateral movement within your infrastructure could browse your installed skills, identify outdated versions with known vulnerabilities, and craft targeted exploits.

Best practice dictates binding to 127.0.0.1 (localhost only) and requiring bearer token authentication: clawhub serve --host 127.0.0.1 --token $(openssl rand -hex 32). This configuration ensures that only processes running on the same machine can access the marketplace interface, and even then, they must present a cryptographically random token. Store this token in a secure credential vault rather than hardcoding it in scripts or environment files.
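For teams that script this setup, the same ideas can be expressed in Python: generate a 32-byte random token (64 hex characters, equivalent in strength to the openssl command above), confirm that the bind address is loopback-only, and compare presented tokens in constant time. The function names are hypothetical, and the "Bearer" header parsing is an assumption about how a front-end check might look, not a description of how the ClawHub server validates tokens.

```python
import hmac
import ipaddress
import secrets


def new_token() -> str:
    """Generate 32 random bytes rendered as 64 hex characters."""
    return secrets.token_hex(32)


def is_loopback_bind(host: str) -> bool:
    """True for 'localhost' or any loopback IP literal; False for 0.0.0.0."""
    if host == "localhost":
        return True
    try:
        return ipaddress.ip_address(host).is_loopback
    except ValueError:
        return False


def is_authorized(authorization_header: str, expected_token: str) -> bool:
    """Check an 'Authorization: Bearer <token>' value in constant time."""
    scheme, _, presented = authorization_header.partition(" ")
    if scheme.lower() != "bearer":
        return False
    return hmac.compare_digest(
        presented.strip().encode("utf-8"), expected_token.encode("utf-8")
    )
```

Using hmac.compare_digest instead of == avoids leaking how many leading characters of a guessed token were correct through timing differences.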

For organizations that require remote access to the ClawHub marketplace—such as teams conducting collaborative skill evaluations—deploy a reverse proxy with mutual TLS authentication. NGINX or Traefik can terminate TLS connections, validate client certificates, and forward authenticated requests to the ClawHub backend. This architecture provides defense-in-depth by ensuring that even if an attacker compromises the ClawHub service, they cannot bypass the TLS authentication layer.

Top 5 “Killer” Skills for March 2026

Despite the security challenges, ClawHub remains home to transformative skills that genuinely extend AI agent capabilities. These five skills have proven indispensable across enterprise deployments:

Wacli (WhatsApp Automation): Enables AI agents to send and receive WhatsApp messages programmatically via the official Business API. Critical for customer service automation in regions where WhatsApp dominates mobile communication. Version 3.2+ includes end-to-end encryption verification and complies with WhatsApp’s Business Platform policies. Organizations deploying this skill at scale should review our MaxClaw: The 24/7 Cloud Assistant Guide for best practices on continuous availability.

Tavily (Advanced Search): Goes beyond simple web scraping to provide structured research across academic databases, news archives, and social media. The semantic deduplication engine prevents redundant results, making it ideal for competitive intelligence gathering. Integration with scholarly databases like arXiv and PubMed distinguishes Tavily from consumer search tools.

Playwright-MCP (Browser Automation): Grants AI agents full control over Chromium, Firefox, and WebKit browsers through Microsoft’s Playwright framework. Unlike simpler HTTP clients, Playwright handles JavaScript-heavy SPAs, CAPTCHA solving, and authenticated sessions. Use with extreme caution—unrestricted browser automation creates significant security risks including credential theft and session hijacking. Before deploying Playwright-based skills, verify your infrastructure meets the OpenClaw System Requirements: 2026 Update specifications for hardware-intensive workloads.

GitHub-Orchestrator: Manages entire software development workflows including issue triage, pull request reviews, and CI/CD pipeline triggers. The v4.0 release added support for GitHub Projects and Copilot Workspace integration. Organizations using GitHub Actions for continuous integration find this skill particularly valuable for closing the loop between AI-generated code and automated testing.

Zapier-Bridge: Connects OpenClaw agents to 5,000+ web services through Zapier’s no-code integration platform. Particularly valuable for organizations that have already invested in Zapier infrastructure but want to add AI orchestration layers. The skill supports both trigger-based automation (when X happens, do Y) and action-based execution (do X in response to agent command), documented in Zapier’s developer platform. Sales teams leveraging this skill for lead qualification should also explore our Outbound Lead Generation: The Solution to Inbound Droughts methodology for comprehensive pipeline strategies.

Official Setup Resources

For additional technical documentation on OpenClaw architecture, consult the OpenClaw Foundation’s official documentation. Security researchers should also reference OWASP’s AI Security and Privacy Guide for broader context on LLM supply chain attacks.

Developers seeking to publish their own skills should begin with the ClawHub Contributor Guide, which covers SKILL.md formatting standards, frontmatter requirements, and the peer review process for Verified skill status. The guide includes templates for common skill types and code examples demonstrating secure credential handling patterns.

Building a Secure ClawHub Architecture: The Defense-in-Depth Model

Implementing ClawHub at scale requires a multi-layered security architecture that assumes breach at every level. The outer layer consists of network segmentation—your AI agents should operate in isolated VLANs with egress filtering that permits only explicitly whitelisted domains. This prevents compromised skills from establishing command-and-control channels to attacker infrastructure.
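Egress filtering is normally enforced at the firewall or proxy, but the matching logic is worth seeing in isolation because naive substring checks are easy to bypass. The sketch below accepts a URL only when its host equals an allowlisted domain or is a subdomain of one. The domains listed are placeholders for illustration.

```python
from urllib.parse import urlparse

# Placeholder allowlist; replace with the domains your agents actually need.
ALLOWED_DOMAINS = {"api.sendgrid.com", "example.internal"}


def egress_allowed(url: str, allowed=ALLOWED_DOMAINS) -> bool:
    """Allow only exact matches or true subdomains of allowlisted domains."""
    host = (urlparse(url).hostname or "").lower().rstrip(".")
    return any(host == domain or host.endswith("." + domain) for domain in allowed)


if __name__ == "__main__":
    for candidate in (
        "https://api.sendgrid.com/v3/mail/send",
        "https://api.sendgrid.com.attacker.example/",
    ):
        print(candidate, egress_allowed(candidate))
```

Matching on the parsed hostname with a leading-dot suffix check rejects lookalikes such as api.sendgrid.com.attacker.example, which a simple "contains" test would let through.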

The middle layer focuses on identity and access management. Each AI agent should run under a dedicated service account with least-privilege IAM policies. For example, an agent responsible for sending customer emails needs only SendGrid API credentials, not AWS root access. Credential vaulting solutions like HashiCorp Vault or AWS Secrets Manager should inject secrets at runtime rather than storing them as environment variables.

The inner layer implements runtime monitoring and anomaly detection. Tools like Falco (for containerized workloads) or osquery (for bare metal) can detect suspicious system calls, file modifications, or network connections initiated by OpenClaw processes. Establish baseline behavior profiles during your initial deployment, then alert on deviations like unexpected outbound SMTP traffic or attempts to access /etc/shadow.

Logging and audit trails complete the defense-in-depth strategy. Every skill execution should generate structured logs containing: timestamp, skill name and version, input parameters, output results, error messages, and the identity of the requesting user or agent. Ship these logs to a centralized SIEM platform like Splunk or Elasticsearch with tamper-proof storage. In the event of a security incident, you’ll need this forensic data to determine the blast radius and identify patient zero.
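A structured log entry with the fields listed above might look like the following sketch. The field names and the helper function are assumptions, since the guide does not define a logging schema. Input parameters should also be scrubbed of secrets before logging, which is omitted here for brevity.

```python
import json
from datetime import datetime, timezone


def skill_log_record(skill, version, requester, inputs, output=None, error=None) -> str:
    """Build one JSON log line describing a single skill execution."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "skill": skill,
        "version": version,
        "requester": requester,
        "inputs": inputs,
        "output": output,
        "error": error,
    }
    # default=str keeps logging from failing on non-JSON-serializable values.
    return json.dumps(record, sort_keys=True, default=str)


if __name__ == "__main__":
    print(skill_log_record("tavily", "1.0.0", "agent-7", {"query": "example"}))
```

Emitting one JSON object per line keeps the records easy to ship to a SIEM and to query by skill name or version during an investigation.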

The Future of ClawHub: Decentralization and Verifiable Builds

Looking beyond March 2026, the ClawHub governance community is actively developing decentralization protocols to eliminate single points of failure and censorship. The proposed “ClawHub Federation” model would allow organizations to operate independent registries that share skill metadata through a blockchain-based gossip protocol inspired by IPFS. This ensures that no single entity—not even the OpenClaw Foundation—can unilaterally remove or alter skills.

Verifiable builds represent another frontier. Currently, skill authors upload SKILL.md files directly to the registry, which means you must trust that the published code matches what the author actually runs. Reproducible build systems like Nix or Guix could generate cryptographic proofs that a given skill binary was compiled from specific source code without modification. Combined with transparency logs inspired by Certificate Transparency, this would create an append-only record of every skill version ever published.
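The append-only property can be illustrated with a simple hash chain, in which each entry commits to the hash of the one before it. Note that this is a deliberately simplified stand-in: Certificate Transparency uses Merkle trees, which also allow efficient inclusion proofs. The entry layout and function names here are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash: str, payload: dict) -> str:
    """Hash the previous entry's hash together with this entry's payload."""
    data = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("ascii") + data).hexdigest()


def append_entry(log: list, payload: dict) -> None:
    """Append a payload, linking it to the current tail of the log."""
    prev = log[-1]["hash"] if log else GENESIS
    log.append({"payload": payload, "prev": prev, "hash": entry_hash(prev, payload)})


def verify_log(log: list) -> bool:
    """Recompute every link; any edit to an earlier entry breaks the chain."""
    prev = GENESIS
    for entry in log:
        if entry["prev"] != prev or entry["hash"] != entry_hash(prev, entry["payload"]):
            return False
        prev = entry["hash"]
    return True
```

Changing the payload of any published version alters its hash and invalidates every later link, which is what makes silent rewrites of history detectable.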

Supply chain security will increasingly rely on automated continuous verification. Rather than scanning skills once at installation time, future OpenClaw agents will periodically re-audit their skill libraries against updated threat intelligence. Skills that receive new malware signatures—even months after installation—will be automatically quarantined and flagged for manual review. This “living threat detection” model mirrors how modern web browsers handle TLS certificate revocation through mechanisms like OCSP stapling.

FAQ: Mastering the ClawHub Ecosystem

What is ClawHub?

ClawHub is the central public registry for OpenClaw skills. It is an open-source marketplace where developers share “Skills” (executable Markdown and scripts) that allow AI agents to interact with third-party apps like Slack, Gmail, or WhatsApp.

How do I fix the “Missing bin:clawhub” error?

On Windows 11, this usually means your PATH environment variable hasn’t updated. Run npm i -g clawhub, then restart your terminal. If the error persists, use the full path or run via npx clawhub.

Are ClawHub skills safe?

Not inherently. While ClawHub integrated VirusTotal Code Insight in February 2026 to scan for malware, prompt-injection attacks can still hide in plain text. Always prioritize “Verified” skills and check for at least 100 downloads.

Can I host my own private ClawHub?

Yes. You can point the CLI at a custom registry by setting the CLAWHUB_REGISTRY environment variable: export CLAWHUB_REGISTRY="https://your-private-repo.com". This is the recommended setup for enterprises to avoid public supply-chain risks.

What’s the difference between clawhub install and clawhub update?

The install command downloads a skill for the first time, while update refreshes an already-installed skill to the latest version. Both commands verify SHA-256 hashes and run VirusTotal scans before completing.

How do I report a malicious skill?

Use the clawhub report <slug> --reason malware command or email security@openclaw.org with evidence. Include the skill’s hash, suspicious code snippets, and observed malicious behavior.

Can skills access my filesystem?

Yes. Skills run with the same permissions as your OpenClaw agent process. Always run production agents under restricted service accounts and use AppArmor or SELinux profiles to limit filesystem access.

What happens if ClawHub goes offline?

Your installed skills continue working since they’re stored locally. However, you won’t be able to search, install, or update skills until the registry returns. This is why enterprises should maintain mirrored private registries.

Do I need an API key to use ClawHub?

No. The public registry is freely accessible. However, rate limits apply: 100 searches per hour and 50 installs per day per IP address. Authenticated users (via GitHub OAuth) receive 10x higher limits.

How often should I audit my installed skills?

Minimum monthly, but weekly is recommended for production environments. Run clawhub audit --local --verbose and review the full report rather than just checking for “Critical” flags.
